Alex is one of the XR influencers most active on social networks (Twitter and YouTube, under the name iBrews). His passions range from architecture to design, from Unreal Engine to theater: a perfect cocktail for exploring the mysteries of today’s immersive creation, and for guessing those of tomorrow.

Before the DK1 – First application of immersive ideas

Alex Coulombe – I studied architecture and theatre at Syracuse University in Upstate New York from 2005 to 2010. My thesis was creating a giant theatre out of Fort Jay on Governors Island, a little bit south of New York City. I needed a way to convey the magnitude and the insanity of what I was planning, which was kind of an early conception of immersive theatre. The idea was really to put an audience on stage. I found that with my traditional modeling tools in architecture, I couldn’t quite communicate that well enough. So I found an early form of augmented reality – just using a webcam and markers in 2009 to help convey my thesis.

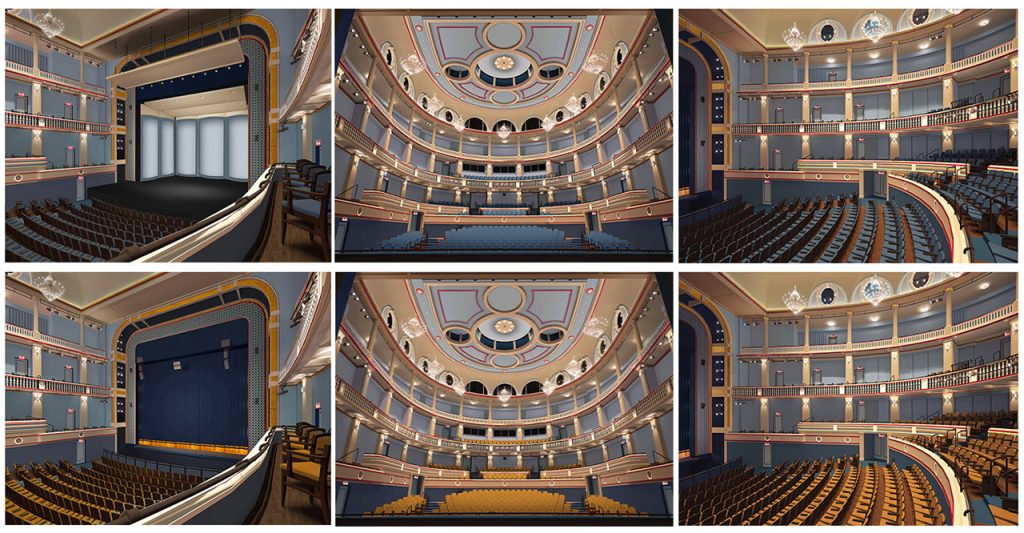

A. C. – Once I started working in architecture, I tried to convince firms that there was value in tools such as augmented reality and real-time technologies. In architecture you need to know what it feels like to move through a space: not just to see screenshots, but to have that sense of compression and release, light and shadow, colour changes, etc. Then in 2013 I discovered Oculus’s Kickstarter, and I saw its potential. At that time I was working for Fisher Dachs Associates: Theatre Planning & Design, and so much time in meetings was spent just trying to convey the spatial representation of something. The moment we started showing how our designs worked in virtual reality, the discussions became easy.

A. C. – Of course, in real-world architecture we have a lot of boundaries and limits: the cost per square foot (or square meter), how you deal with context and shadow, and all the restrictions of physics. I love the world of virtual architecture and its inherent freedoms, but it’s actually the same thing! Virtualization has its own limits, just different kinds of budgets and different kinds of limitations, based on the hardware (you may need your entire team, and your clients, to use the same headset!) or the number of polygons in your project. There are things you can do in virtual space that you can’t do in the real world, but at the core, choices should still be guided by architectural psychology, space and movement.

About the evolution of real-time engines for a creative designer

A. C. – “Virtual production” has become such a buzzword for the film and television industries, and even video games to a certain extent, but it’s not a term that’s been used a lot for live events. I work in Unity and, over the past couple of years, more and more in Unreal Engine. I’m excited to see where Unity goes now that they’ve acquired Weta Digital’s tools, which is such a remarkable acquisition! The fact is you can take tools made for one industry (virtual production tools were originally created for the film industry, for example) and fully integrate them into another industry, like live events. The work I’m involved with right now is “cherry-picking” a lot of different techniques, stagecraft and elements that were not created to be live or to be in theatre. How do we make this totally real-time, with no cleanup and no post-processing, and get the most we can out of the moment when the thing is actually being captured? That’s been incredibly fun.

A. C. – What’s most remarkable to me is how much easier it keeps getting to be self-taught in tools like Unity and Unreal. They are very accessible, with great communities, free trials and more affordable equipment. The evolution since 2019, just going from the Quest 1 to the Quest 2, is incredible. I’m also a teacher, and I see with my students how much faster people can get up to speed, learning how to create compelling immersive experiences. It becomes less and less of a technical challenge and more about the creative idea. It just seems like we can pick the right immersive medium for every idea (painting, video game, book…), and there are fewer and fewer steps between that idea and something people can actually experience and be a part of.

A. C. – Take VRChat: I see a lot of (really) passionate people there, but they haven’t taken the time to learn the craft of space making. I want to encourage everyone to go out and try everything they want, and I also want them to learn the fundamentals, study their craft, and get better. “Learn the rules like a pro, so you can break them like an artist,” said Picasso. “Otherwise it’s just a lot of broken things that don’t serve much purpose” – I added that last part. Picasso started out painting very realistically and became more and more abstract as he went on. In the end he didn’t need the rules, but it was important that he knew them.

A. C. – I’m so grateful for how quickly we can iterate in VR. I’m very, very frustrated by the limitations of the real world: bureaucracy, budgets, and all the different stamps of approval that everything needs. With virtual reality I can have an idea right now and, by the afternoon, show it to my wife and children, to the people immediately around me, or even share it online with everyone. This is totally unprecedented and remarkable, from both a creative and a functional perspective. We get to create experiences that have utility, whether it’s about training, applications or just a way for people to connect.

Replicating the real world in virtual reality

A. C. – I think we have to create a new (virtual) world. I love the word “skeuomorphism” (editor’s note: a term most often used in graphical user interface design to describe interface objects that mimic their real-world counterparts in how they appear and/or how the user can interact with them – www.interaction-design.org), which means replicating things from the real world, because that’s where the familiarity is. Take a theatre environment: there’s a very well-understood ritual of how it works. There’s value in replicating things from the real world, because it makes sense to people. During a theatre show you know you should be quiet and pay attention to the actors, but in a virtual world other conventions don’t make as much sense, such as telling the audience to stay seated in one spot and never change their perspective. If they can fly, move around, or scale themselves way down to get up close to an actor’s face and see all the nuances of their expression, we should take full advantage of that.

A. C. – Why shouldn’t they do that and take advantage of the affordances of these new mediums? The tricky thing is doing it in a way where people don’t feel too overwhelmed or lost. I’m sure that, as with cinema or touchscreen devices, we’re going to find a lot of mimicking of the real world early on, for comfort and familiarity. Remember, early cinema was filmed from a single angle, with no cuts, wide shots or close-ups. The language of cinematography needed to be developed over time. In the same way, early apps were basically websites! Both of those are new languages that had to develop, about 100 years apart. As time goes on, we discover ways to really take advantage of the affordances of these new immersive mediums, move away from the familiar and do something totally new. What we see today in virtual and augmented reality is only going to expand as this technology becomes more ubiquitous.

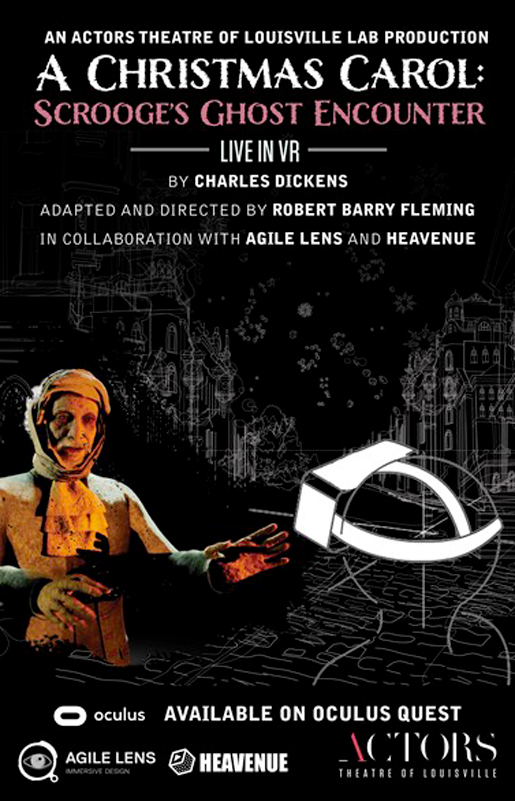

Live-performance theatre in VR and A CHRISTMAS CAROL: SCROOGE’S GHOST ENCOUNTER

A. C. – I loved what Sarah Ellis and the Royal Shakespeare Company did with the virtual production of DREAM. They did such a lovely job of finding ways to take motion capture technology and broadcast it live. My only frustration was that I wanted to be in virtual reality during the show, because it was such a beautiful world. And I saw it five different times with my kids! My kids love virtual theatre. They’ve been to THE UNDER PRESENTS/TEMPEST, WELCOME TO RESPITE, TINKER, METAMOVIE: ALIEN RESCUE, ONBOARDXR (see our interview) and all sorts of wonderful VR shows. We’re taking a lot of inspiration from what we really loved about these for this production of A CHRISTMAS CAROL: SCROOGE’S GHOST ENCOUNTER LIVE IN VR with Actors Theatre of Louisville. You are free to be wherever you want during the show. If you want to watch Scrooge, you can be two inches away from him. If you want to go explore the environment over there, by all means do it. Virtual reality really gives you the freedom to do things you can’t do in the real world. It’s really fun to find those places where we can deviate from reality.

A. C. – I’ve always been excited about how technology can help theatre. In high school I wrote a play that was more like a 45-minute musical, using projection mapping. I just wanted to figure out how to milk the limited technology we had at our disposal (school projectors) in the most meaningful way possible. Then in college I co-founded a theater group called the Warehouse Architecture Theatre, which is still at Syracuse. When I went from using virtual reality to designing theatre, I thought there must be ways this technology could help us pre-visualise upcoming shows. I worked on the Shed (a new building in New York City you can see in the HBO show Succession), where we took that same approach: designing the building virtually, but also imagining what certain shows in that event space might actually be like.

Working with VR and the theatermaker community

A. C. – In 2018 I was speaking at a conference in Boston with Philip Rosedale (founder of Second Life, co-founder of High Fidelity VR), and we began to chat about the potential of live events in VR. He asked me if I could assemble a coalition of New York City-based theatermakers and start to explore what works and what doesn’t in virtual reality performance. That small coalition of talented friends grew into a supergroup featuring the talented friends of talented friends, and so on and so forth, until it eventually became known as Alive in Plasticland. After High Fidelity pivoted to spatial audio, some of us continued exploring across Mozilla Hubs, VRChat, Altspace, Rec Room, etc. These are all free social VR platforms, and they are not tailored to putting on live events. At the other end of the spectrum are events happening in Fortnite and Wave. They are beautifully produced, but they’re custom productions with multimillion-dollar budgets. For us in the theater community, that’s unattainable.

A. C. – That’s where I, alongside my co-founders Or Waknin and Eytan Manor, decided to develop a new platform, Heavenue. It’s a venue in the cloud, a performance platform geared towards all scales of budget, resources and technology. We are trying to make it as easy as possible to create a compelling show that anyone around the world can see on any internet-connected device, including a $300 VR headset. For A CHRISTMAS CAROL LIVE IN VR we are developing a world with millions of polygons. How can we make that work? We use cloud computing, which gives everyone access to computers on the internet that are 100 times more powerful than their standalone device. All they need is a strong (enough) internet connection to send the signal back and forth. VR viewers get to see an experience that most of them, I’m sure, will never have had on a Quest, especially if they don’t have a powerful desktop computer, because we’re broadcasting something far beyond the capabilities of that mobile chipset.

A. C. – I curate content about these topics for the Theatre Communications Group (TCG), the largest gathering of theater professionals in North America. That’s where, in 2019 (the ‘before times’!), I met the Actors Theatre of Louisville. They were very excited about the possibilities of live events in VR, so they reached out just a couple of months ago because they wanted to share their upcoming production of A CHRISTMAS CAROL across the world, and it was the perfect case to try Heavenue. Robert Barry Fleming, the director, has a wonderful vision for this version of A CHRISTMAS CAROL, the Charles Dickens recital, in which Ari Tarr plays many characters, speaking to the audience and interacting with the ghosts. This show really looks at the story from a new angle, and takes advantage of this medium really well. We have these terrifying ghosts, environments you could never build in the real world, and this incredibly talented actor whom I’ve been following for years. I can’t wait to experience all the nuances of his performance two inches away from his wrinkled old Scrooge avatar.

https://www.youtube.com/c/ibrews/featured
